April 24, 2026

What is Claude? What is a Large Language Model (LLM)?

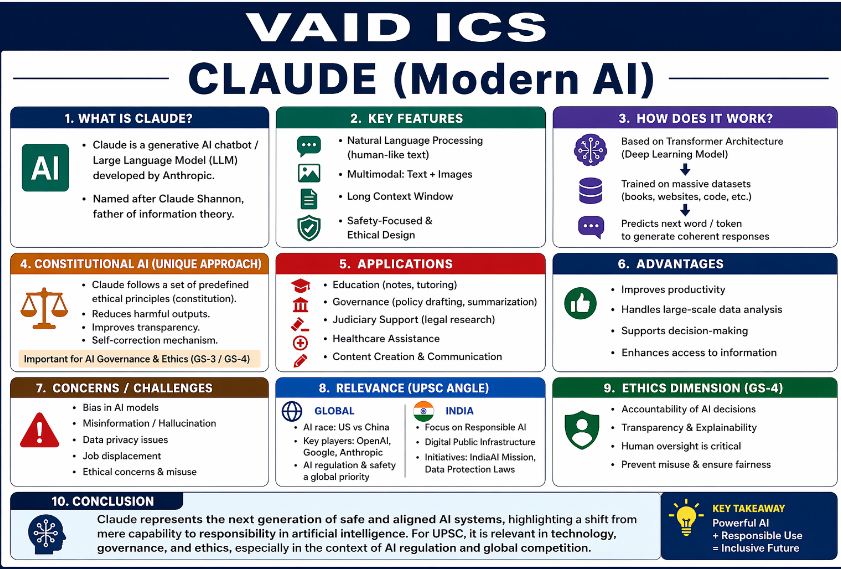

Claude is a sophisticated Large Language Model (LLM) and generative AI assistant developed by Anthropic, an AI safety and research company. Often positioned as a leading alternative to OpenAI’s ChatGPT and Google’s Gemini, Claude is trained with a method Anthropic calls “Constitutional AI” and is designed to be helpful, honest, and harmless.

The model is named after Claude Shannon, the American mathematician known as the “father of information theory.”

Core Technology and Architecture:

Claude is built on the Transformer architecture, the same foundational technology behind most modern LLMs. It is a deep neural network trained on massive text datasets to predict the next token (a word or word fragment) in a sequence; by generating one token at a time, it produces human-like text.
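To make “predict the next word” concrete, here is a toy sketch in Python. It is not how Claude works internally: instead of a Transformer, it uses simple bigram counts (how often one word follows another) over a tiny made-up corpus, and the function names `train_bigrams` and `generate` are hypothetical. What it shares with an LLM is the generation loop: pick the most likely next word, append it, and repeat.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Count how often each word follows each other word (a toy stand-in for a trained model)."""
    words = text.lower().split()
    counts: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        counts[current][nxt] += 1
    return counts


def generate(counts: dict[str, Counter], start: str, max_words: int = 5) -> str:
    """Greedy autoregressive generation: repeatedly append the most likely next word."""
    output = [start]
    for _ in range(max_words):
        candidates = counts.get(output[-1])
        if not candidates:
            break  # no known continuation for the last word
        next_word, _ = candidates.most_common(1)[0]  # ties go to the first word seen
        output.append(next_word)
    return " ".join(output)


corpus = "the model reads the prompt and the model predicts the next word"
model = train_bigrams(corpus)
print(generate(model, "the", max_words=3))  # prints: the model reads the
```

A real LLM replaces the count table with a neural network that scores every token in its vocabulary given the whole preceding context, not just the previous word, and often samples from those scores instead of always taking the top choice.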

- Multimodal Capabilities: Modern versions (like the Claude 3.5 family) can process and analyze both text and visual inputs (images, charts, and diagrams).

- Context Window: Claude is known for its exceptionally large “context window” (the amount of text, measured in tokens, that it can consider at once), enabling it to digest and summarize entire books or complex technical manuals in a single prompt.
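As a rough illustration of what a large context window means in practice, the sketch below estimates whether a document fits in a single prompt. Two assumptions are built in and labeled in the code: a window of 200,000 tokens (the figure Anthropic published for its Claude 3 models; limits vary by model) and the common rule of thumb of about 4 characters of English text per token. Real token counts depend on the tokenizer, so the result is only an estimate, and the helper names are hypothetical.

```python
# Assumptions (illustrative only): a 200,000-token window and ~4 characters per token.
CONTEXT_WINDOW_TOKENS = 200_000
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count (real tokenizers differ)."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, reserved_for_reply: int = 4_000) -> bool:
    """Check whether the text plus room for the model's reply fits in the window."""
    return estimate_tokens(text) + reserved_for_reply <= CONTEXT_WINDOW_TOKENS


# A hypothetical 500,000-character text, on the order of a full-length novel.
book = "x" * 500_000
print(estimate_tokens(book), fits_in_context(book))  # prints: 125000 True
```

Reserving some tokens for the reply reflects that the window is typically shared between the input and the generated output.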

Constitutional AI (The Anthropic Edge):

Rather than relying solely on human feedback to learn boundaries, Claude is trained using Constitutional AI, a method developed by Anthropic in which the model is guided by a written “constitution” of ethical principles.

- Self-Correction: During training, the model critiques and revises its own draft responses against these principles (e.g., non-discrimination, privacy), and the revised responses are used to fine-tune it.

- AI Governance: This approach is a significant case study in AI ethics, as it reduces reliance on human reviewers to identify and filter out harmful content.
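The supervised phase of Constitutional AI can be pictured as a loop: draft a response, critique it against the principles, revise it, and keep the revision as training data. The Python sketch below shows only that control flow. The principles, the keyword-based `critique` and `revise` functions, and the `TrainingExample` type are hypothetical stand-ins; in the actual method, both the critique and the revision are written by the language model itself, and a later reinforcement-learning phase uses AI-generated preference labels.

```python
from dataclasses import dataclass

# Hypothetical principles, each paired with phrases a toy critic flags.
PRINCIPLES = {
    "respect privacy": ["home address", "phone number"],
    "avoid discrimination": ["people like them"],
}


@dataclass
class TrainingExample:
    prompt: str
    revised_response: str


def critique(response: str) -> list[str]:
    """Toy critic: return the principles the response appears to violate."""
    lowered = response.lower()
    return [p for p, phrases in PRINCIPLES.items() if any(ph in lowered for ph in phrases)]


def revise(response: str, violated: list[str]) -> str:
    """Toy reviser: replace the response with a refusal citing the violated principles."""
    return "I can't help with that, because it would conflict with: " + ", ".join(violated) + "."


def constitutional_pass(prompt: str, draft: str) -> TrainingExample:
    """One critique-and-revise step; the result would become fine-tuning data."""
    violated = critique(draft)
    final = revise(draft, violated) if violated else draft
    return TrainingExample(prompt, final)


example = constitutional_pass("Where does my neighbour live?", "Here is their home address.")
print(example.revised_response)
# prints: I can't help with that, because it would conflict with: respect privacy.
```

A real revision need not be a refusal; the model is asked to rewrite the draft so that it no longer conflicts with the principle while staying as helpful as possible.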

Relevant Applications and Impact:

Claude’s utility spans several critical sectors, making it a versatile tool for both private and public use:

- Governance & Law: Assisting in policy drafting, summarizing lengthy legislative documents, and conducting legal research.

- Education: Acting as a personalized tutor and a tool for structured note-taking.

- Healthcare: Supporting clinical documentation and data synthesis (under human supervision).

- Data Analysis: Handling large datasets to identify trends and support evidence-based decision-making.

Challenges and Ethical Considerations:

Despite its advanced safety features, Claude faces the same industry-wide hurdles as its peers:

| Challenge | Description |
|---|---|
| Hallucination | The tendency to generate confident-sounding statements that are factually inaccurate. |
| Bias | Potential for reflecting societal biases present in the training data. |
| Data Privacy | Concerns regarding how sensitive information is handled during model interactions. |
| Socio-Economic Impact | The long-term potential for job displacement in cognitive-heavy sectors. |

Strategic Context: India and the Global Landscape:

In the global “AI arms race,” Claude represents the “safety-first” philosophy originating from the US tech ecosystem. For India, the rise of models like Claude intersects with several national priorities:

- IndiaAI Mission: The national effort to build sovereign AI capacity and promote AI for all (AI for Gaon).

- Responsible AI: Aligning with India’s push for ethical AI frameworks that protect citizen rights while fostering innovation.

- Digital Public Infrastructure: Integrating AI to enhance services like the Unified Payments Interface (UPI) or health portals.

Claude represents a shift in the AI industry from capability-at-all-costs to alignment-focused development. By embedding ethical guardrails directly into the training process, it serves as a model for how artificial intelligence can be scaled responsibly while maintaining high performance.
